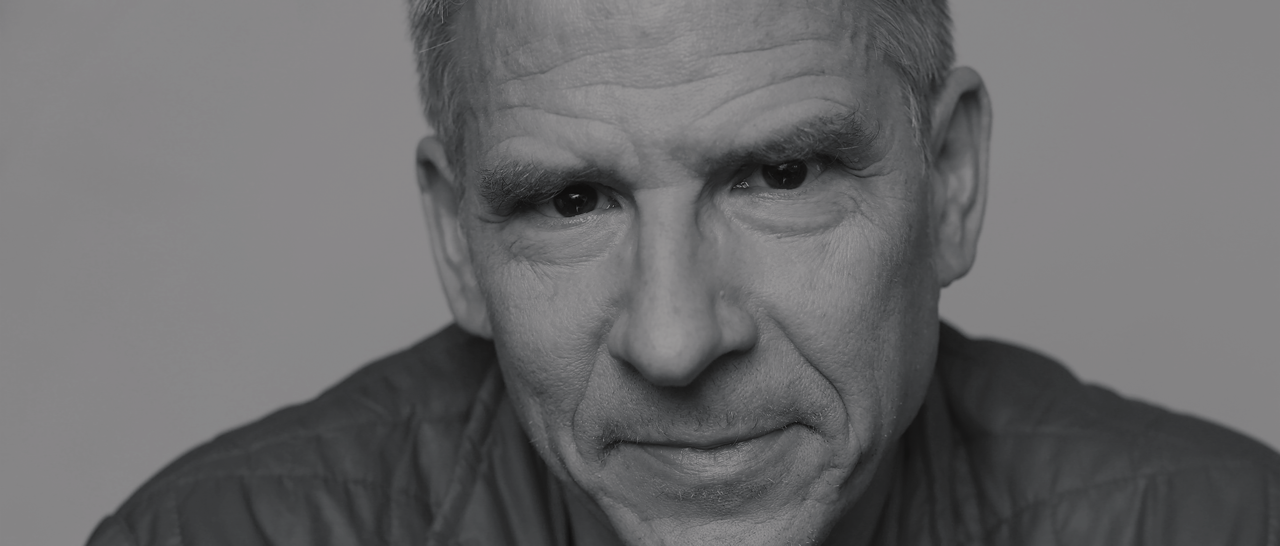

Photo courtesy of the Allen Institute.

Consciousness is a thriving industry. It’s not just the meditation retreats and ayahuasca shamans. Or the conferences with a heady mix of philosophers, quantum physicists, and Buddhist monks. Consciousness is a buzzing business in neuroscience labs and brain institutes. But it wasn’t always this way. Just a few decades ago, consciousness barely registered as a credible subject for science.

Perhaps no one did more to legitimize its study than Francis Crick, who launched a second career in neurobiology after cracking the genetic code. In the 1980s Crick found a brilliant collaborator in the young scientist Christof Koch. In some ways, they made an unlikely team. Crick, a legend in science, was an outspoken atheist, while Koch, 40 years younger, was a Catholic yearning for ultimate meaning. Together, they published a series of pioneering articles on the neural correlates of consciousness until Crick died in 2004.

What’s the Buzz: Bees have all the complicated brain components that humans have, but in a smaller package. “So yes, I do believe it feels like something to be a honey bee,” Christof Koch says. Photo from Pixabay.

Koch went on to a distinguished career at Caltech before joining the Allen Institute for Brain Science in Seattle. Today, as the president and chief scientific officer, he supervises several hundred scientists, engineers, and informatics experts trying to map the brain and figure out how our neural circuits process information. The Institute made news with the discovery of three giant neurons connecting many regions of the mouse brain, including one that wraps around the entire brain. The neurons extend from a set of cells known as the claustrum, which Crick and Koch maintained could act as a seat of consciousness.

Koch is one of the great thinkers about consciousness. He has a philosophical frame of mind and jumps readily from one big idea to the next. He can talk about the tough ethical decisions regarding brain-impaired patients and also zoom out to give a quick history of Christian thinking on the soul. In our conversation, he ranged over a number of far-out ideas—from panpsychism and runaway artificial intelligence to the consciousness of bees and even bacteria.

You’ve said you always loved dogs. Did growing up with a dog lead to your fascination with consciousness?

I’ve wondered about dogs since early childhood. I grew up in a devout Roman Catholic family, and I asked my father and then my priest, “Why don’t dogs go to heaven?” That never made sense to me. They’re like us in certain ways. They don’t talk, but they obviously have strong emotions of love and fear, hate and excitement, of happiness. Why couldn’t they be resurrected at the end of time?

Are scientific attitudes about animal consciousness simplistic?

The fact is, I don’t even know that you’re conscious. The only thing I know beyond any doubt—and this is one of the central insights of Western philosophy—is Cogito ergo sum. What Descartes meant is the only thing I’m absolutely sure of is my own consciousness. I assume you’re conscious because your behavior is similar to mine, and I could see your brain if I put you in an MRI scanner. When you have a patient who’s locked-in, who can’t talk to me, I have to infer it. The same with animals. I can see they’re afraid when it’s appropriate to be afraid, and they display all the behavioral traits of being afraid, including the release of hormones in their bloodstream. If you look at a piece of dog brain or mouse brain and compare that to a piece of human brain the same size, only an expert with a microscope can tell for sure that this is a dog brain or a human brain. You really have to be an expert neuroanatomist.

We share much of our evolutionary history with dogs and even dolphins. But what about lizards or ants? What about bacteria? Can they be conscious?

It becomes progressively more difficult. The brain of a bird or a lizard has a very different evolutionary history, so it becomes more difficult to assert without having a general theory. Ultimately, you need a theory that tells us which physical systems can be conscious. By the time you get to a worm, let alone to bacteria, you can believe that it feels like something to be a worm because that’s ultimately what consciousness is. If it feels like something to be a worm, then it’s conscious. Right now, most people believe it doesn’t feel like anything to be my iPhone. Yet it may well be true that it feels like something to be a bee. But it’s not easy to test that assertion in a scientific way.

What do you mean when you say “it feels like something”?

It feels like something to be you. I can’t describe it to you if you’re a zombie. If you were born blind, I can never describe what it means to see colors. You are simply unable to comprehend that. So it is with consciousness. It’s impossible to describe it unless you have it. And we have these states of consciousness unless we are deeply asleep or anesthetized or in a coma. In fact, it’s impossible not to be conscious of something. Even if you wake up discombobulated in a dark hotel room, you’re jet-lagged and your eyes are still closed, you are already there. Before there was just nothing, nada, rien. Then slowly some of your brain boots up and you realize, “Oh, I’m here. I’m in Beijing and I flew in last night.” The difference between nothing and something is a base-level consciousness.

Is this self-awareness?

It’s even much simpler. I might not even know who I am when I’m waking up. It takes time to boot up and realize who you are, where you are, what time of day it is. First, you open your eyes and just see darkness. Darkness is different from nothing. It’s not that I see darkness behind my head; I just don’t see at all. That’s what consciousness is. It’s a basic feeling.

You said bees could be conscious. They do amazing things, and yet they have tiny brains.

Yes, they do very complicated things. We know that individual bees can fly mazes. They can remember scents. They can return to a distant flower. In fact, they can communicate with each other, through a dance, about the location and quality of a distant food source. They have facial recognition and can recognize their beekeeper. Under normal conditions, they would never sting their beekeeper; it’s probably a combination of visual and olfactory cues.

Their brains contain roughly a million neurons. By comparison, our brains contain about 100 billion, so a hundred thousand times more. Yet the complexity of the bee’s brain is staggering, even though it’s smaller than a piece of quinoa. It’s roughly 10 times higher in terms of density than our cortex. They have all the complicated components that we have in our brains, but in a smaller package. So yes, I do believe it feels like something to be a honey bee. It probably feels very good to be dancing in the sunlight and to drink nectar and carry it back to their hive. I try not to kill bees or wasps or other insects anymore.

You’re talking about the consciousness of an individual bee—not the hive, which has another level of complexity.

I’m talking about the potential for sentience in individual bees. Would we exclude them because they can’t talk? Well, lots of people can’t talk. Babies can’t talk, impaired patients can’t talk. Because they don’t have a human brain? Well, that’s completely arbitrary. Yes, their evolution diverged from us 250 million years ago or so, but they share with us a lot of the basic metabolism and machinery of the brain. They have neurons, ionic channels, neurotransmitters, and dopamine just like we have.

So brain size is not the key factor in consciousness?

That’s entirely correct. In fact, there’s no principled reason to assume that brain size should be the be-all and end-all of consciousness.

We also know Neanderthals had bigger brains than the Homo sapiens who lived near them in Europe. Yet we survived and they didn’t.

Their brain was maybe 10 percent larger than our brain. We don’t know why we survived. Did we just outbreed them? Were we more aggressive? There’s some research showing that dogs play a role here. At the same time when Homo neanderthalensis became extinct—around 35,000 years ago—Homo sapiens domesticated the wolf and they became the two apex hunters. Homo sapiens and wolves/dogs started to collaborate. We became this ultra-efficient hunting cooperative because we now had the ability to be much more efficient at hunting down prey over long distances and exhausting them. So the creature with the larger brain didn’t survive and the one with the smaller brain did.

Fatal Intelligence: Given the probable existence of trillions of planets, why haven’t we detected life elsewhere? It’s likely, Christof Koch says, that sufficiently complex and intelligent life would destroy itself. Photo from NASA.

Why were humans able to create civilizations that have transformed the planet?

We don’t have a precise answer. We have big brains and are, by some measure, the most intelligent species, at least in the short term. We’ll see whether we’ll actually survive in the long term, given our propensity for mass violence. And we’ve manipulated the planet to such an extent that we are now talking about entering a new geological age, the Anthropocene. But it’s unclear why whales or dolphins—some of which have bigger brains and more neurons in their cortex than we do—why they are not called smarter or more successful. Maybe because they have flippers and live in the ocean, which is a relatively static environment. With flippers, you’re unable to build sophisticated tools. Of course, human civilization is all about tools, whether it’s a little stone, an arrow, a bomb, or a computer.

So hands are crucial for their ability to manipulate tools.

You need not only a brain, but also hands that can manipulate the environment. Otherwise, you can think about the world but you can’t act upon it. That’s probably why this particular species of primate excelled and took over the planet.

There are fascinating questions about how deep consciousness goes. You’ve embraced the old philosophy of panpsychism. Isn’t this the idea that everything in nature has some degree of consciousness or mind?

Yes, there’s this ancient belief in panpsychism: “Pan” meaning “every,” “psyche” meaning “soul.” There are different versions of it depending on which philosophical or religious tradition you follow, but basically it meant that everything is ensouled. Now, I don’t believe that a stone is ensouled or a planet is ensouled. But if you take a more conceptual approach to consciousness, the evidence suggests there are many more systems that have consciousness—possibly all animals, all unicellular bacteria, and at some level maybe even individual cells that have an autonomous existence. We might be surrounded by consciousness everywhere and find it in places where we don’t expect it because our intuition says we’ll only see it in people and maybe monkeys and also dogs and cats. But we know our intuition is fallible, which is why we need science to tell us what the actual state of the universe is.

Most scientists would dismiss panpsychism as ancient mythology. Why does this idea resonate for you?

It’s terribly elegant in its simplicity. You don’t say consciousness only exists if you have more than 42 neurons or 2 billion neurons or whatever. Instead, the system is conscious if there’s a certain type of complexity. And we live in a universe where certain systems have consciousness. It’s inherent in the design of the universe. Why is that so? I don’t know. Why does the universe follow the laws of quantum mechanics? I don’t know. Can I imagine a universe where the laws of quantum mechanics don’t hold? Yes, but I don’t happen to live in such a universe, so I believe our universe has certain types of complexity and a system that gives rise to consciousness. Suddenly the world is populated by entities that have conscious awareness, and that one simple principle leads to a number of very counterintuitive predictions that can, in principle, be verified.

So it all comes down to how complex the system is? And for the human brain, how its neurons and synapses are wired together?

It comes down to the circuitry of the brain. We know that most organs in your body do not give rise to consciousness. Your liver, for example, is very complicated, but it doesn’t seem to have any feelings. We also know that consciousness does not require your entire brain. You can lose 80 percent of your neurons. You can lose the little brain at the back of your brain called the cerebellum. There was a 24-year-old Chinese woman who discovered, when she had to get a brain scan, that she has absolutely no cerebellum. She’s one of the extremely rare cases of people born without a cerebellum, including deep cerebellar nuclei. She never had one. She talks in a somewhat funny way and she’s a bit ataxic. It took her several years to learn how to walk and speak, but you can communicate with her. She’s married and has a child. She can talk to you about her conscious experiences. So clearly you don’t need the cerebellum.

Yet the cerebellum has everything you expect of neurons. It has gorgeous neurons. In fact, some of the most beautiful neurons in the brain, so-called Purkinje cells, are found in the cerebellum. Why does the cerebellum not contribute to consciousness? It has a very repetitive and monotonous circuitry. It has 69 billion neurons, but they have simple feed-forward loops. So I believe the way the cerebellum is wired up does not give rise to consciousness. Yet another part of the brain, the cerebral cortex, seems to be wired up in a much more complicated way. We know it’s really the cortex that gives rise to conscious experience.

It sounds like you’re saying our intelligence comes from this wiring, not from some special substance in the neurons. Could a conscious system be made of something totally different?

That’s correct. There’s nothing inherently magical about the human brain. It obeys all the laws of physics like everything else in the universe. There isn’t anything supernatural that’s added to my brain or my cortex that gives rise to a conscious experience.

Is it like a computer?

A computer shares some similarities with the brain, but this is a metaphor and that can be dangerous. One is evolved, the other one is constructed. In the one case you have software and hardware. It’s much more difficult to make that distinction in the brain. I think we have to be cautious about comparisons between a brain and a computer. But in theory, a system that’s complex enough could be conscious. It may be possible that human-built artifacts would feel like something and would also experience the world.

The Internet is an extremely complex system. Could it feel happy or depressed?

If a computer or the Internet has sentience, the challenge is how we relate its conscious state to ours because its evolutionary history is radically different. It doesn’t have our senses or our reward systems. Of course, this is also a threat. The Internet and runaway AI will not have our value system. It may not care at all about humans. Why should it? We don’t care about ants or bugs. Most of us don’t even care about chickens or cows except when we want to eat them. This is a concern moving forward if we endow these entities not just with consciousness but intelligence. Is that really such a good idea?

We’re not the dominant species on the planet because we are wiser or swifter or more powerful. It’s because we’re more intelligent and ruthless. If we build intelligent systems that exceed even our intelligence, we may believe we can control them. “Oh yeah, I always have this kill-switch. Don’t worry, it’ll be OK.” Well, one day somebody’s going to say, “Oops, I didn’t want that. I didn’t mean that to happen.” And it may be our last invention.

That’s the scenario in a lot of science fiction. But you really believe artificial intelligence could develop a certain level of complexity and wipe us out?

This is independent of the question of computer consciousness. Yes, if you have an entity that has enough AI and deep machine learning and access to the Cloud, etc., it’s possible in our lifetime that we’ll see creatures that we can talk to with almost the same range of fluidity and depth of conversation that you and I have. Once you have one of them, you replicate them in software and you can have billions of them. If you link them together, you could get superhuman intelligence. That’s why I think it behooves all of us to think hard about this before it may be too late. Yes, there’s a promise of untold benefits, but we all know human nature. It has its dark side. People will misuse it for their own purposes.

How do we build in those checks to make sure computers don’t rule the world?

That’s a very good question. The only reason we don’t have a nuclear bomb in every backyard is because you can’t build it easily. It’s hard to get the material. It takes a nation state and tens of thousands of people. But that may be different with AI. If current trends accelerate, it may be that 10 programmers in Timbuktu could unleash something truly malevolent onto mankind. These days, I’m getting more pessimistic about the fate of a technological species such as ours. Of course, this might also explain the Fermi paradox.

Remind us what the Fermi paradox is.

We have yet to detect a single intelligent species, even though we know there are probably trillions of planets. Why is that? Well, one explanation is it’s just extremely unlikely for life to arise and we’re the only one. But I think a more likely possibility is that any time you get life that’s sufficiently complex, with advanced technology, it has somehow managed to annihilate itself, either by nuclear war or by the rise of machines.

Just Like Heaven: “In a cathedral, I get a feeling of luminosity out of the numinous,” says Christof Koch. Gaudi’s La Sagrada Familia is seen above. “You can get that feeling without being a Catholic.” Photo from Pixabay.

You are a pessimist! You really think any advanced civilization is going to destroy itself?

If it’s very aggressive like ours and it’s based in technology. You can imagine other civilizations that are not nearly as aggressive and live more in harmony with themselves and nature. Some people have thought of it as a bottleneck. As soon as you develop technology to escape the boundary of the planet, there’s an argument that civilization will also develop computers and nuclear fusion and fission. Then the question is, can it grow up? Can it become a full-grown, mature adult without killing itself?

You have embraced Integrated Information Theory, which was developed by your colleague Giulio Tononi. What can this tell us about consciousness?

The Integrated Information Theory of consciousness derives a mathematical calculus and gives rise to something known as a consciousness meter, which a variety of clinical groups are now testing. If you have an anesthetized patient, or a patient who’s been in a really bad traffic accident, you don’t really know if this person is minimally conscious or in a vegetative state; you treat them as if they’re conscious, but they don’t respond in any meaningful way.

How can you be sure they’re conscious?

You’re never really sure. So you want a brain-based test that tells you if this person is capable of some experience. People have developed that based on this Integrated Information Theory. That’s big progress. The current state of my brain influences what happens in my brain the next second, and the past state of my brain influences what my brain does right now. Any system that has this cause-effect power upon itself is conscious. It derives from a mathematical measure. It could be a number that’s zero, which means a system with no cause-effect power upon itself. It’s not conscious. Or you have systems with a “Phi” different from zero. The Phi measures, in some sense, the maximum capacity of the system to experience something. The higher the number, the more conscious the system.

So you could assign a number to everything that might have some degree of consciousness—whether it’s an ant, a lizard, bacteria, or a vegetative human being?

Yes, you or me, the Dalai Lama or Albert Einstein.

The higher the number, the more conscious?

The number by itself doesn’t tell you it’s now thinking, or is conscious of an image or a smell. But it tells you the capacity of the system to have a conscious experience. In some deep philosophical sense, the number tells you how much it exists. The higher the number, the more the system exists for itself. There isn’t a Turing Test for consciousness. You have to look at the way the system is built. You have to look at the circuitry, not its behavior, whether it’s a computer or a biological brain. This has now been tested and validated in many patients, including locked-in patients who are fully conscious, people under anesthesia who are not conscious, people in deep sleep, and those in vegetative states or minimal-conscious states. So the question now is whether this can be turned into something practical that can be used at every clinic in the country or the world to test patients who’ve just been in a bad traffic accident.

Obviously, there are huge implications. Do you turn off the life-support machines?

First, does the patient suffer or is nobody home anymore? In the famous case of Terri Schiavo, we could tell the brain stem was still functioning but there wasn’t anybody home. Her consciousness had disappeared 15 years earlier.

Isn’t there still the old “mind-body problem”? How do three pounds of goo in the human brain, with its billions of neurons and synapses, generate our thoughts and feelings? There seems to be an unbridgeable gap between the physical world and the mental world.

No, it’s just how you look at it. The philosopher Bertrand Russell had this idea that physics is really just about external relationships—between a proton and electron, between planets and stars. But consciousness is really physics from the inside. Seen from the inside, it’s experience. Seen from the outside, it’s what we know as physics, chemistry, and biology. So there aren’t two substances. Of course, a number of mystics throughout the ages have taken this point of view.

It does look strange if you grew up like me, as a Roman Catholic, believing in a body and a soul. But it’s unclear how the body and the soul should interact. After a while, you realize this entire notion of a special substance that can’t be tracked by science—that I have but animals don’t have, which gets inserted during the developmental process and then leaves my body—sounds like wishful thinking and just doesn’t cohere with what we know about the actual world.

It sounds like you lost your religious faith as you learned about science.

I lost my religious faith as I matured. I still look fondly back upon it. I still love the religious music of Bach. I still get this feeling of awe. In a cathedral, I can get a feeling of luminosity out of the numinous. When I’m on a mountain top, when I hear a dog howling, I still wake up some mornings and say, “I’m amazed that I exist. I’m amazed there is this world.” But you can get that without being a Catholic.

Does that experience of awe or the numinous feel religious?

Not in a traditional sense. I was raised to believe in God, the Trinity, and particularly the Resurrection. Unfortunately, I now know four words: “No brain, never mind.” That’s bad news. Once my brain dies, unless I can somehow upload it into the Cloud, I die with it. I wish it were otherwise, but I’m not going to believe something if it’s opposed by all the facts.

A few years ago, you and some other scientists spent a week with the Dalai Lama. Was that a meaningful experience?

Yes, it was. There were thousands of monks in the Drepung Monastery who were listening to our exchange. This particular Tibetan Buddhist tradition is quite fascinating. I’m not a scholar of it, but they view the mind primarily from an interior perspective. They’ve developed very sophisticated ways of analyzing it that are different from our way. We take the external way of Western science, which is independent of the observer. But ultimately, we’re trying to approach the same thing. We’re trying to approach this phenomenon of conscious experience. They have no trouble with the idea of evolution and other creatures being sentient. I found that very heartening—in particular the Dalai Lama’s insistence on the primacy of science. I asked him, “What happens if science is in conflict with certain tenets of Buddhist faith?” He laughed and said, “Well, if this belief doesn’t accord with what science ultimately discovers about the universe, then we have to throw it out.”

But the Dalai Lama believes in reincarnation.

We talked about that. In fact, I said, “Well, I’m really sorry, Your Holiness, but I think we just have to agree that Western science shows that if there’s no physical carrier, you’re not going to get a mind. You’re not going to get memory because you need some mechanism to retain the memory.” I asked him, “Were you not reincarnated from the previous Dalai Lama?” And he just laughed and said, “Well, I don’t remember anything about that anymore.”

Has this scientific knowledge helped you sort out the deep existential questions about meaning, about why we’re here?

My last book is titled Confessions of a Romantic Reductionist. I’m a reductionist because I do what scientists do. I take a complex phenomenon and try to pull it apart and reduce it to something at a lower level. I’m also romantic in the sense that I believe I can decipher the distant contrails of meanings. I find myself in a universe that seems to be conducive to life—the Anthropic Principle. And for reasons I don’t understand, I also find myself in a universe that became conscious, ultimately reflecting upon itself. Who knows what might happen in the future if we continue to evolve without destroying ourselves? To what extent can we become conscious of the universe as a whole?

I don’t know who put all of this in motion. It’s certainly not the almighty God I was raised with. It’s a god that resides in this mystical notion of all-nothingness. I’m not a mystic. I’m a scientist. But this is a feeling I have. I find myself in a wonderful universe with a very positive and romantic outlook on life. If only we humans could do a better job of getting along with each other.

Steve Paulson is the executive producer of Wisconsin Public Radio’s nationally syndicated show To the Best of Our Knowledge. He’s the author of Atoms and Eden: Conversations on Religion and Science.